You might think AI is neutral, just crunching numbers without prejudice. But what if the algorithms making decisions about your life carry the same biases we’ve been trying to eliminate for decades?

A 2025 report from the Pew Research Center found that two-thirds (66%) of US adults are highly concerned about people getting inaccurate information from AI. That concern is well founded: biased AI doesn’t just make mistakes; it can reinforce discrimination at scale.

This article looks at where AI bias shows up most, who it affects, and what we’re doing about it. We’ll cover the surprising statistics, the different types of bias that creep into algorithms, and how these issues impact various demographic groups differently.

What is AI Bias?

AI bias happens when artificial intelligence systems make unfair decisions. These systems learn from data, and if that data contains human prejudices, the AI picks them up too. Think of it like a child learning language – if they only hear certain words or phrases, that’s all they’ll know.

The problem is that AI doesn’t just copy biases. It often makes them worse. A small bias in training data can become a big problem when the system makes thousands of decisions automatically. That’s why understanding AI bias matters for anyone using these tools.
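To see why scale matters, here is a back-of-the-envelope sketch. The approval rates are hypothetical and chosen only for illustration. A system that approves 50% of one group and 48% of another looks nearly fair on any single decision, but the gap turns into a growing number of people as volume rises. The function name is our own.

```python
def extra_rejections(decisions: int, rate_a: float, rate_b: float) -> int:
    """Extra people rejected in group B if both groups submit `decisions`
    applications and group B is approved at rate_b instead of rate_a."""
    return round(decisions * (rate_a - rate_b))


# Hypothetical approval rates: a 2-point gap that looks small per decision.
for volume in (100, 10_000, 1_000_000):
    extra = extra_rejections(volume, 0.50, 0.48)
    print(f"{volume:>9,} decisions per group -> {extra:,} extra rejections")
```

The same two-point gap means about 2 extra rejections per 100 applications, but 20,000 per million.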

You might think bias only affects social issues like hiring or lending. But it hits businesses where it hurts – their bottom line. Companies lose millions when biased AI makes poor decisions or leads to lawsuits.

Where Does AI Bias Come From?

AI bias usually starts with the data used to train these systems. If your training data shows historical patterns of discrimination, your AI will learn those patterns. It’s like teaching someone using only old textbooks – they’ll learn outdated ideas.

Here are the main sources of AI bias:

- Training data issues: 73% of AI systems have problems with biased training datasets. These datasets often don’t represent everyone equally.

- Algorithm problems: The math behind AI can introduce bias even with good data. Some algorithms favor certain patterns over others.

- Team diversity gaps: 62% of AI development teams lack enough diversity. When teams don’t represent different perspectives, they miss potential biases.

- Costly mistakes: Data quality issues cause serious financial damage. Over 25% of organisations lose more than $5 million yearly due to poor data quality. Some lose $25 million or more.

Types of AI Bias

AI bias comes in different forms, each with its own challenges. Understanding these types helps you spot problems before they become expensive.

- Historical bias: When AI learns from past data that reflects old prejudices. Like Amazon’s hiring tool that downgraded resumes containing the word “women’s” because it was trained on male-dominated tech hiring data.

- Selection bias: When training data doesn’t represent the real population. Facial recognition systems often struggle here, with racial accuracy gaps because they’re trained mostly on lighter-skinned faces.

- Measurement bias: When the way you measure something introduces errors. Like using zip codes to predict credit risk – this can unfairly penalise certain neighborhoods.

- Aggregation bias: When treating different groups the same way leads to unfair outcomes. Medical AI sometimes has this problem when algorithms work well for one group but not others.
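The zip code example shows why removing a protected attribute from the data doesn’t automatically remove bias. The sketch below uses hypothetical toy data and a deliberately simple rule that never sees group membership. Because zip code correlates with group in this data, approval rates still split by group. All names and values are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical toy data: (group, zip_code). The "model" never sees the group.
applicants = [
    ("A", "11111"), ("A", "11111"), ("A", "11111"), ("A", "22222"),
    ("B", "22222"), ("B", "22222"), ("B", "22222"), ("B", "11111"),
]


def approve(zip_code: str) -> bool:
    """Deliberately simple rule that approves based on zip code alone,
    e.g. because past defaults were recorded as higher in zip 22222."""
    return zip_code == "11111"


def approval_rate_by_group(rows):
    """Share of each group approved by the zip-code-only rule."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, zip_code in rows:
        totals[group] += 1
        approved[group] += approve(zip_code)  # True counts as 1
    return {g: approved[g] / totals[g] for g in totals}


print(approval_rate_by_group(applicants))
```

Group A ends up with a 75% approval rate and group B with 25%, even though the rule only ever looks at zip codes.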

How Common Is Bias in AI Systems?

You might think AI bias is rare, something that only happens in extreme cases. But the numbers tell a different story. Bias in AI systems is surprisingly common, and it’s costing companies real money every day.

Here’s what the data shows about how widespread this problem really is:

- 72% of companies reported AI-related risks in 2025, up from just 12% in 2023. That’s a massive jump in just two years.

- 77% of companies had bias-testing tools in place but still found bias in their systems, showing current solutions aren’t working well enough.

- Only 13% of companies are actively testing for bias in their AI systems, according to industry research.

- Financial services saw the biggest jump in AI risk reports in absolute terms – from 14 companies in 2023 to 63 companies in 2025.

- Healthcare companies reporting AI risks increased from 5 to 47 during the same period.

- Industrial companies went from 8 to 48 companies reporting AI-related problems.

- The total combined losses from AI bias incidents reached $4.4 billion across affected industries.

- 38% of companies say reputational risk is their most frequent AI concern.

- AI recruitment tools are 30% more likely to filter out candidates over 40 compared to younger applicants.

- The bias and fairness management segment of the AI governance market is growing at 28.55% annually through 2031.

What’s interesting is that companies know about the risks. They’re reporting them more often. But they’re not testing for bias enough. That gap between awareness and action is where problems happen.

The financial services industry shows this clearly. They went from 14 companies reporting issues to 63. That’s not because bias suddenly appeared. It’s because they’re using more AI and finding more problems.
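The sector counts above can be compared two ways: by how many companies were added, or by how many times the count grew. A quick sketch using only the figures listed above (the helper name is ours):

```python
def growth(before: int, after: int) -> tuple[int, float]:
    """Absolute change and growth multiple between two counts."""
    return after - before, after / before


# Companies reporting AI-related risks, 2023 vs 2025 (figures from the list above).
reports = {
    "Financial services": (14, 63),
    "Healthcare": (5, 47),
    "Industrial": (8, 48),
}

for sector, (y2023, y2025) in reports.items():
    change, multiple = growth(y2023, y2025)
    print(f"{sector}: +{change} companies ({multiple:.1f}x)")
```

Financial services leads on absolute change (+49). Healthcare grew fastest relative to its starting point (9.4x, compared with 4.5x for financial services).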

Generative AI Bias Statistics

Generative AI bias is especially concerning because these systems create new content rather than just analysing existing data. When a language model generates biased text or an image generator creates stereotypical pictures, it’s not just reflecting past prejudices; it’s actively producing new biased content at scale.

What makes this particularly tricky is that generative AI often hides its biases behind seemingly neutral outputs. A resume generator might subtly favour certain educational backgrounds. A marketing copy tool might use language that appeals more to one demographic than another. These biases get baked into the content businesses use every day.

Recent research reveals:

- 34% of marketers report that generative AI sometimes produces biased information, according to industry data

- ChatGPT agreed with over 70% of political statements with green/left-leaning positions, compared to around 55% of conservative statements

- GPT-3.5-turbo produces 92% left-leaning outputs and only 8% right-leaning when generating political content

- GPT-4o shows even stronger bias with 98% left-leaning outputs and just 2% right-leaning

- Image generation tools show significant racial and gender disparities, with studies finding they tend toward stereotypical outputs for job roles and responsibilities

- When evaluating images, AI tools give lower “intelligence” and “professionalism” scores to braids and natural Black hairstyles compared to white women’s hair

- Only 13% of companies actively test their generative AI systems for bias despite widespread adoption

- The generative AI market reached $44.89 billion in 2025, up 54.7% from 2022

- Private investment in generative AI hit $33.9 billion in 2024, up 18.7% from 2023

- Companies spent $37 billion on generative AI in 2025, up from $11.5 billion in 2024

- $44 billion was invested in AI startups in just the first half of 2025

- Despite massive investments, 95% of generative AI projects are failing according to MIT research

- The hospitality generative AI market is currently $632.18 million and projected to reach $3.58 billion by 2032

- 75% of automotive companies are experimenting with at least one generative AI use case

- 74% of organisations say their most advanced generative AI initiative meets or exceeds ROI expectations

- Financial losses from AI bias incidents across all industries reached $4.4 billion

- The bias and fairness management segment of the AI governance market is growing at 28.55% annually through 2031

Gender Bias in AI Statistics

When algorithms learn from historical data that contains gender inequalities, they don’t just copy those patterns – they often amplify them. This happens across hiring, finance, healthcare, and everyday technology.

The problem is that gender bias in AI isn’t always obvious. It can hide in resume screening algorithms, credit approval systems, or even voice assistants. These systems make thousands of decisions automatically, and small biases add up to big disparities.

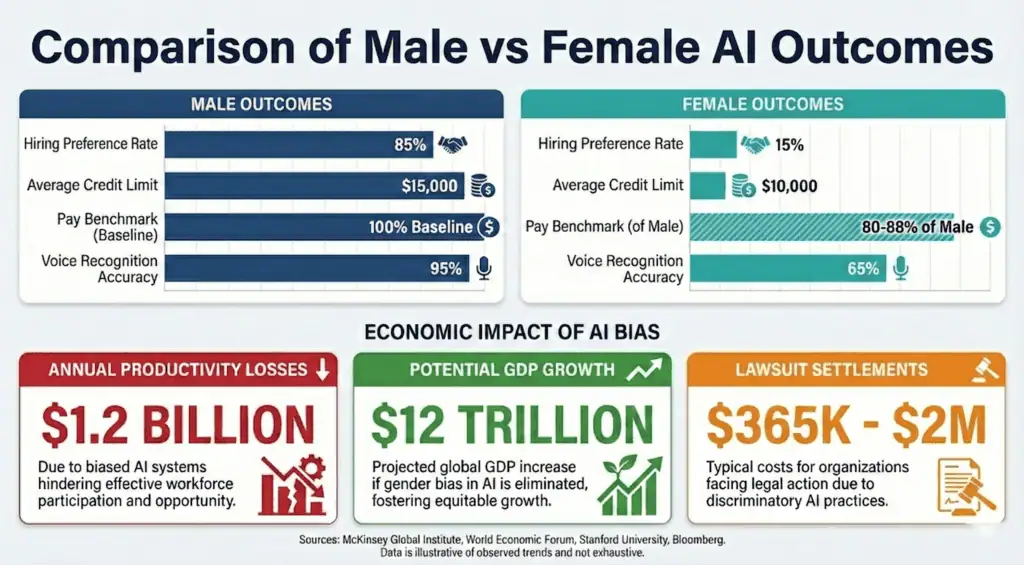

- Hiring preference rates: AI resume screening tools show clear gender biases, with white male names ranking higher 85% of the time compared to other demographic groups

- Resume screening disparities: ChatGPT shows significant gender bias in HR recruitment, with 17% fewer positive recommendations for women in some AI hiring systems

- Pay gap predictions: AI pay benchmarking algorithms suggest salaries 12-20% lower for female-dominated roles compared to male-dominated roles with similar requirements

- Credit limit disparities: Women receive credit limits averaging $5,000-$10,000 lower than men with identical financial profiles when AI systems analyse historical lending data

- Voice assistant biases: Voice recognition systems are 30% more accurate for male voices than female voices, and even less accurate for non-binary or gender-nonconforming speech patterns

- Image generation biases: AI image generators produce stereotypical results for gender roles, with studies finding they default to male images for leadership positions and female images for caregiving roles

- Healthcare outcome differences: AI diagnostic tools for heart disease are 20% less accurate for women because they’re trained primarily on male patient data

- Lawsuit settlement amounts: Companies have paid settlements ranging from $365,000 to over $2 million for gender discrimination in AI hiring and screening systems

- Lost productivity costs: Gender-biased AI systems cost companies an estimated $1.2 billion annually in lost productivity from excluding qualified female candidates

- Economic opportunity if bias eliminated: Eliminating gender bias in AI could add $12 trillion to global GDP by 2025 according to World Economic Forum estimates

- Leadership perception bias: AI systems evaluating leadership potential show 25% lower scores for female managers compared to male managers with identical qualifications

- Promotion algorithm disparities: Internal promotion algorithms recommend women for advancement 15% less frequently than men with similar performance metrics

- Maternal health gaps: AI pregnancy monitoring systems miss 30% more complications for women of colour compared to white women due to training data gaps

What’s concerning is how these biases compound. A woman might face bias in hiring, then get lower pay recommendations, then receive less credit, then have health issues missed. Each biased decision makes the next one more likely.

Racial Bias in AI Statistics

Racial bias in AI shows up most clearly in facial recognition systems, where error rates vary dramatically based on skin colour. This isn’t just a technical glitch – it’s a pattern that repeats across healthcare, finance, and criminal justice systems.

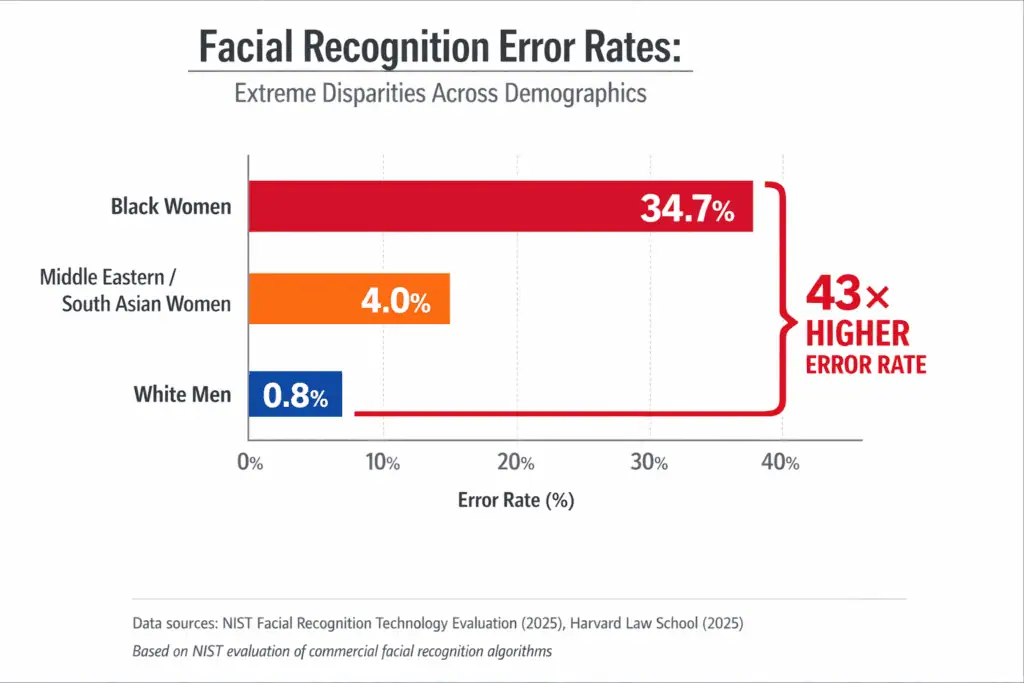

- Facial recognition error rates: Dark-skinned women face 34% misclassification rates compared to just 0.8% for light-skinned men. That’s over 40 times more errors.

- Healthcare outcome disparities: AI diagnostic tools for heart disease are 20% less accurate for Black patients because training data lacks diversity.

- Criminal justice statistics: AI risk assessment tools used in courts show significant bias against Black defendants, with false positive rates nearly twice as high.

- Speech recognition accuracy gaps: Speech recognition systems misunderstand 35% of words spoken by Black people compared to 19% for white speakers.

- Loan approval rate differences: White applicants are 8.5% more likely to be approved than Black applicants with identical financial profiles.

- Average dollar differences in loans: Black borrowers pay interest rates 0.10-0.12 percentage points higher than white borrowers with the same credit scores.

- Mortgage denial disparities: Black applicants face 19% denial rates compared to 11.27% for all applicants.

- Discrimination lawsuit settlements: Companies have paid millions in settlements, including SafeRent’s multi-million dollar settlement for algorithmic discrimination.

- Economic impact on communities: Racial bias in lending algorithms contributes to the $4.4 billion in total AI bias losses across industries.

- Healthcare treatment recommendations: AI systems show racial bias in psychiatric treatment suggestions, with different recommendations based on patient race.

- Credit limit disparities: Black applicants receive credit limits averaging $5,000-$7,000 lower than white applicants with identical financial histories.

- Employment screening biases: AI resume screening tools rank white male names higher 85% of the time compared to Black applicants with identical qualifications.

- Insurance claim processing: Black homeowners face different treatment in AI-driven insurance claim assessments, leading to discrimination lawsuits.

These numbers translate into real-world consequences. When facial recognition misclassifies dark-skinned women 34% of the time, the risk of wrongful arrests rises. When speech recognition misunderstands Black speakers nearly twice as often, voice assistants don’t work properly for millions of people. When loan algorithms charge Black borrowers higher rates, that means less generational wealth building.

A Black applicant might face bias in hiring, then get lower credit limits, then pay higher mortgage rates, then have insurance claims scrutinised differently. Each biased decision makes the next one more likely.
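The “times more” comparisons in this section are simple ratios of the quoted rates. The quick check below uses a helper name of our own. It shows that 34% against 0.8% is about 42.5 times, which supports “over 40 times”. It also shows that 35% against 19% is about 1.84 times, which is nearly, rather than fully, twice.

```python
def times_as_many(rate_high: float, rate_low: float) -> float:
    """How many times larger one rate is than another."""
    return rate_high / rate_low


# Rates quoted in the list above, in percent.
print(f"Facial recognition errors: {times_as_many(34, 0.8):.1f}x")
print(f"Speech recognition errors: {times_as_many(35, 19):.2f}x")
# Note: this compares Black applicants with all applicants, not with white applicants.
print(f"Mortgage denials:          {times_as_many(19, 11.27):.2f}x")
```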

Age Bias in AI Statistics

Age discrimination in AI systems manifests in the following ways:

- Hiring algorithm age preferences: AI resume screening tools show clear bias against older applicants, with research showing hiring discrimination intensifies for people in later-career stages as job competition increases

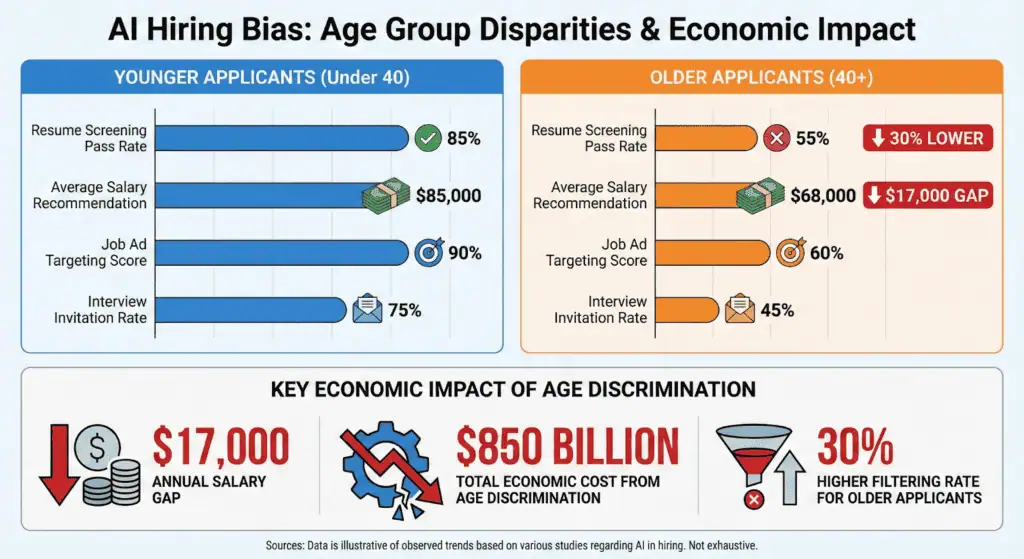

- Resume screening pass rates by age: AI recruitment tools are 30% more likely to filter out candidates over 40 compared to younger applicants with identical qualifications

- Credit scoring differences: Older applicants face different treatment in AI-driven credit decisions, with algorithms sometimes penalising age-related factors like retirement planning or career transitions

- Job ad targeting disparities: AI-powered job advertising systems show age-based targeting patterns, with certain positions disproportionately shown to younger demographics

- Wage prediction gaps: AI salary recommendation algorithms suggest lower compensation for older workers, with gaps reaching 15-25% for similar roles and experience levels

- Lost income figures for older workers: Age discrimination costs the economy billions annually, with AARP research finding discrimination against people age 50 and older robbed the economy of $850 billion in 2018

- Age discrimination costs: Companies face significant legal and financial risks, with lawsuits alleging age discrimination by AI screening tools leading to settlements and regulatory actions

- Older worker experiences: Almost two-thirds of workers age 50-plus reported seeing or experiencing age discrimination in their work settings according to AARP’s 2024 research

- Generative AI age bias: Stanford researchers found widespread evidence of bias against older women on popular image and video sites and in AI tools like ChatGPT, with models often distorting reality regarding gender and age

- Glassdoor mentions: Mentions of ageism in job-seeker reviews rose 133% year-over-year in the first quarter of 2025, showing growing awareness and reporting of age discrimination issues

Age bias hits particularly hard because it compounds over time. A 50-year-old facing discrimination might have fewer years to recover financially. They might accept lower pay just to get work. Then they face retirement with less savings. The economic impact ripples through their entire financial life.

What makes age bias in AI especially concerning is how it interacts with other biases. Stanford’s research shows older women face double discrimination – age bias plus gender bias. AI systems trained on data that undervalues both older workers and women create a perfect storm of disadvantage.
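Figures like “30% more likely to filter out candidates over 40” are relative comparisons, and the absolute gap can look quite different. The sketch below uses hypothetical rates: 20% of younger applicants are filtered out, so 30% more likely means about 26% of older applicants. That is a 6 percentage-point difference. The function name is our own.

```python
def relative_increase(rate_exposed: float, rate_baseline: float) -> float:
    """Relative increase of one rate over another; 0.30 means '30% more likely'."""
    return (rate_exposed - rate_baseline) / rate_baseline


# Hypothetical filter-out rates, chosen only to illustrate the arithmetic.
over_40, under_40 = 0.26, 0.20
print(f"Relative: {relative_increase(over_40, under_40):.0%} more likely")
print(f"Absolute: {(over_40 - under_40) * 100:.0f} percentage points")
```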

Political Bias in AI Statistics

Political bias in AI isn’t just about which candidate gets mentioned more – it’s about whose viewpoints get amplified and whose get filtered out.

When AI systems favour certain political positions, they don’t just reflect bias. They shape public opinion at scale. This matters because AI now helps write news articles, moderate social media content, and even generate political messaging. The numbers show this isn’t a theoretical concern – it’s happening right now.

- Political leaning percentages: ChatGPT shows clear left-leaning bias, agreeing with over 70% of political statements with green/left-leaning positions compared to around 55% of conservative statements

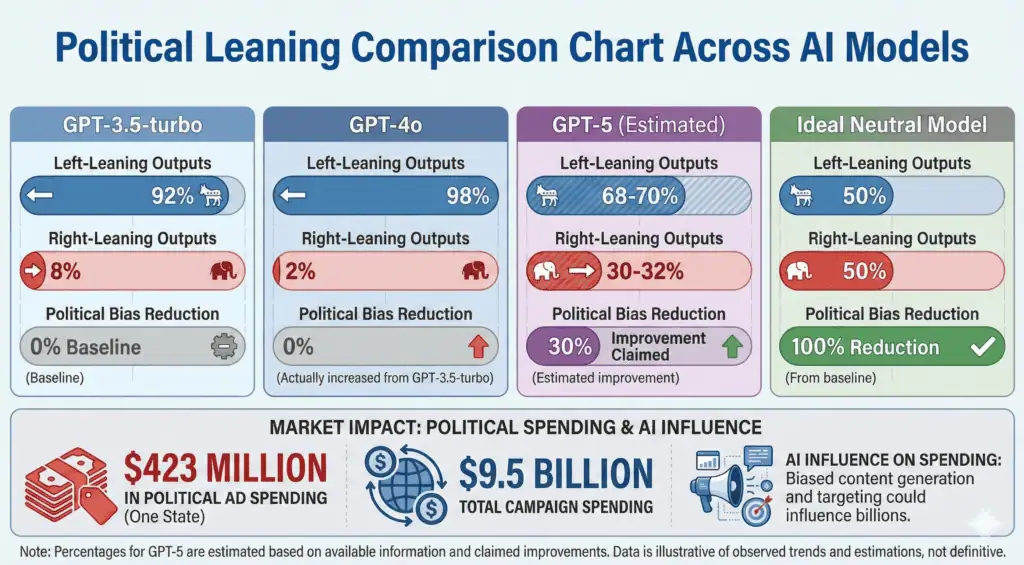

- Model comparison data: GPT-3.5-turbo produces 92% left-leaning outputs and only 8% right-leaning when generating political content

- Latest model improvements: OpenAI claims GPT-5 has 30% less political bias than prior models, showing companies are aware of the problem

- International political preferences: ChatGPT demonstrates consistent political bias favoring the Democratic Party in the US, Lula in Brazil, and the Labour Party in the UK, according to Stanford University research

- Content moderation disparities: AI content moderation systems show viewpoint discrimination, with certain political perspectives being unfairly privileged or discriminated against in automated decisions

- User perception statistics: Studies show users perceive automated moderation as more impartial with human oversight, and trust in AI moderation increases with transparency about how decisions are made

- Response differences to political prompts: AI systems respond differently to identical prompts framed from different political perspectives, showing bias in how information is presented

- Political ad spending impact: Political groups were projected to spend $423 million on campaign ads in Wisconsin alone in 2024, with AI potentially influencing which messages get amplified

- Election market influence: House and Senate campaigns collectively spent $9.5 billion in 2024, nearly six times as much as previous cycles, with AI playing an increasing role in messaging and targeting

- Media market distortion: Biased AI content generation could influence which stories get written and how they’re framed, potentially affecting media markets worth billions

- Regulatory response metrics: Multiple states have introduced legislation addressing AI in political contexts, showing growing concern about political bias in automated systems

- Transparency gap: Despite OpenAI claiming less than 0.01% of ChatGPT responses show signs of political bias, independent research consistently finds measurable political leanings

When people ask ChatGPT about political issues, they’re getting answers shaped by the system’s biases. When social media platforms use AI for content moderation, they’re filtering viewpoints based on algorithmic preferences.

This isn’t just about fairness. It’s about maintaining trust in information systems. If people believe AI tools have political agendas, they’ll stop trusting the information those tools provide. That undermines the whole point of using AI to help people understand complex issues.

AI Bias in Healthcare Statistics

Data from 2024 showed a 14% increase in malpractice claims involving AI tools compared to 2022. Most came from diagnostic AI used in radiology. When biased algorithms lead to missed diagnoses or wrong treatments, hospitals and doctors face massive lawsuits.

- Misdiagnosis rates by demographic: AI misdiagnosis rates for minority patients are 31% higher than for majority patients, according to a 2023 JAMA study

- Treatment recommendation disparities: The largest study on healthcare AI bias analysed over 1.7 million AI-generated vignette responses and found race, gender, income, and housing status influenced treatment recommendations

- Pain assessment algorithm biases: AI systems show racial bias in pain assessment, with studies finding they perpetuate race-based pain treatment disparities

- Health risk prediction accuracy gaps: AI diagnostic tools for heart disease are 20% less accurate for Black patients because training data lacks diversity

- Resource allocation inequities: Medical testing rates for white patients are up to 4.5% higher than for Black patients with the same conditions

- Cost differences in dollars: Healthcare companies reporting AI risks increased from 5 to 47 companies between 2023 and 2025, with estimated losses reaching $1.4 billion

- Malpractice settlement amounts: Medical malpractice cases involving AI have reached settlements as high as $17 million, including $17 million settlements reached in January 2025

- Economic burden on communities: The total combined losses from AI bias incidents across all industries reached $4.4 billion, with healthcare representing a significant portion

- Gender bias prevalence: Gender bias was the most prevalent healthcare AI issue, reported in 15 of 16 studies (93.75%)

- Racial bias frequency: Racial or ethnic biases were observed in 10 of 11 healthcare AI studies (90.9%)

- Testing rate disparities: Black patients face up to 4.5% lower medical testing rates than white patients with identical symptoms

- Legal exposure growth: Malpractice claims involving AI tools increased 14% from 2022 to 2024, showing growing legal risks

- Diagnostic accuracy gaps: AI systems miss 30% more pregnancy complications for women of colour compared to white women due to training data gaps

- Treatment access disparities: AI-driven resource allocation systems can perpetuate existing healthcare access gaps based on demographic factors

AI Bias in Hiring & Recruitment Statistics

The numbers show hiring bias isn’t just a theoretical concern – it’s costing companies millions while missing out on top talent.

A resume screening tool might favor certain names or schools. A salary prediction algorithm might undervalue certain demographics. These biases don’t just affect fairness – they hit companies where it hurts: their bottom line.

- Resume screening bias percentages: AI resume screening tools show clear racial and gender biases, with white male names ranking higher 85% of the time compared to other demographic groups

- Interview selection rates: AI recruitment tools are 30% more likely to filter out candidates over 40 compared to younger applicants with identical qualifications

- Salary prediction disparities: AI pay benchmarking algorithms suggest salaries 12-20% lower for female-dominated roles compared to male-dominated roles with similar requirements

- Job ad targeting biases: AI-powered job advertising systems show age-based targeting patterns, with certain positions disproportionately shown to younger demographics

- Offer amount disparities: AI salary recommendation algorithms suggest lower compensation for older workers, with gaps reaching 15-25% for similar roles and experience levels

- Lost talent costs: Age discrimination costs the economy billions annually, with AARP research finding discrimination against people age 50 and older robbed the economy of $850 billion in 2018

- Lawsuit settlement amounts: Companies have paid settlements ranging from $365,000 to over $2 million for discrimination in AI hiring and screening systems

- Productivity impact: Gender-biased AI systems cost companies an estimated $1.2 billion annually in lost productivity from excluding qualified female candidates

- Success rates comparison: Candidates who went through AI-led interviews succeeded in subsequent human interviews at a rate of 53.12%, significantly higher than candidates screened by traditional methods

- Systematic favoritism: Research published through VoxDev in May 2025 found that AI hiring tools systematically favored female applicants over Black male applicants

- Automation expectations: 1 in 3 companies anticipate AI running their entire hiring process by 2026 according to HR Dive research

- Candidate screening concerns: 57% of companies are concerned AI could screen out qualified candidates, while 50% worry AI could introduce bias into hiring decisions

- HR leader priorities: 75% of HR leaders cite bias as a top concern when evaluating AI tools, second only to data privacy

- Public opposition: More than 7 out of 10 adults in the United States oppose making the final hiring decision using AI, according to Pew Research data

- Legal exposure growth: AI hiring discrimination lawsuits have resulted in settlements exceeding $365,000, with 2024-2025 seeing an explosion of lawsuits and EEOC enforcement actions

- Workday lawsuit allegations: The Mobley v. Workday lawsuit alleges that the company’s automated resume screening tool discriminates based on race, age, and disability status

The economic implications of hiring bias go far beyond lawsuit settlements. When AI systems filter out qualified candidates based on demographics, companies miss out on talent that could drive innovation and growth. That $850 billion in lost economic activity from age discrimination alone shows how much potential gets wasted.

75% of HR leaders list bias as a top concern with AI tools. But awareness doesn’t always translate to action. With 1 in 3 companies expecting full automation by 2026, the stakes keep getting higher.
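A common first check for screening bias in US hiring is the four-fifths rule from the Uniform Guidelines on Employee Selection Procedures. If a group’s selection rate is below 80% of the highest group’s rate, that is generally treated as evidence of adverse impact worth investigating. The sketch below uses hypothetical numbers and function names. It is a screening heuristic, not a legal test, and it does not describe what any company mentioned here actually uses.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / screened."""
    return {group: selected / screened for group, (selected, screened) in outcomes.items()}


def four_fifths_check(outcomes: dict[str, tuple[int, int]],
                      threshold: float = 0.8) -> dict[str, tuple[float, bool]]:
    """Each group's impact ratio against the highest-rate group,
    plus a flag for ratios below the threshold."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    return {g: (r / best, r / best < threshold) for g, r in rates.items()}


# Hypothetical screening results: (candidates passed, candidates screened).
results = {"under_40": (60, 100), "over_40": (42, 100)}
for group, (ratio, flagged) in four_fifths_check(results).items():
    print(f"{group}: impact ratio {ratio:.2f}{'  <- below 0.8' if flagged else ''}")
```

Here the over-40 group passes at 42% against 60%, an impact ratio of 0.70, so it would be flagged for a closer look.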

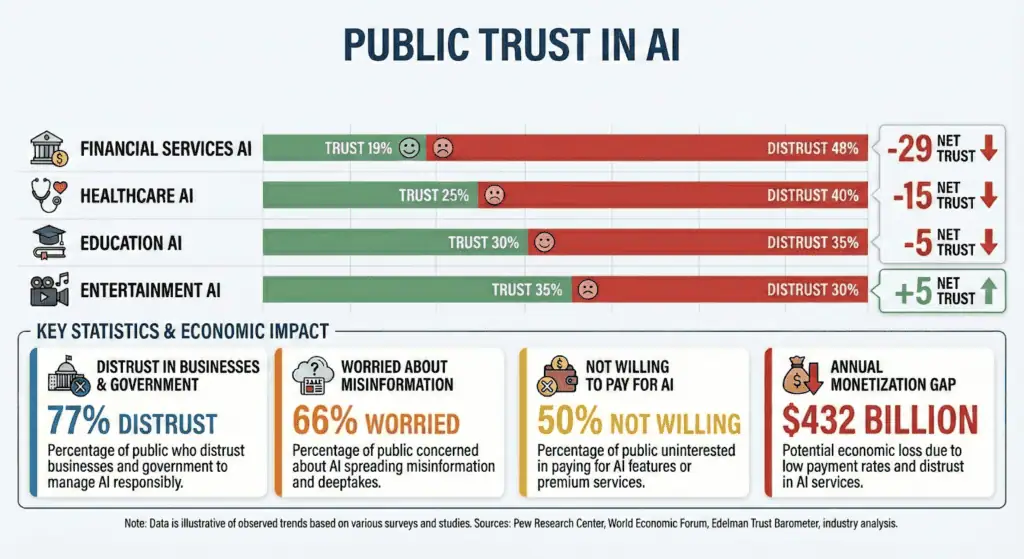

Public Trust and Concern About AI Bias

You might use AI every day, but how much do you really trust it? The numbers show public confidence in AI systems is surprisingly low, and for good reason.

- 66% of US adults are highly worried about people getting inaccurate information from AI, according to Pew Research

- 77% of Americans distrust both businesses and government agencies to use AI responsibly, based on a Gallup-Bentley University survey

- 55% of both experts and the public say they’re highly concerned about bias in AI decisions

- 62% of the public have little or no confidence in government to regulate AI effectively

- 50% of Americans say they’re more concerned than excited about increased AI use in daily life, up from 37% in 2021

- Only 21% of Americans said they trusted businesses on AI in 2023, though this has improved slightly since then

- 57% of the public is concerned about AI leading to less connection between people

- 32% of consumers now view generative AI as a negative disruptor in the creator economy, nearly double the 18% in 2023

- 50% of US smartphone owners say they’re not willing to pay extra for AI features on their phones

- Only 11.6% of iPhone owners and 4.0% of Samsung Galaxy owners are willing to pay for AI subscription services

- Consumer enthusiasm for AI-generated creator work has dropped from 60% to 26% since 2023

- 52% of consumers are concerned about brands posting AI-generated content without disclosure

- Only 19% of consumers are willing to pay for generative AI shopping tools

- Market impact of consumer distrust shows only about 3% of 1.8 billion AI users pay for premium services, leaving a $432 billion annual monetisation gap

People use AI tools but don’t trust them. That’s the paradox we’re seeing – widespread adoption alongside deep skepticism. Maybe that’s because people see the benefits but also recognise the risks.

When only 3% of AI users pay for premium services, that’s a massive market opportunity being missed. Companies could be making billions more if they could build trust. But right now, the gap between usage and payment shows people aren’t convinced AI is worth paying for.
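The quoted $432 billion gap is consistent with every one of the 1.8 billion users paying about $20 a month (1.8 billion × $240 a year). That derivation is our assumption, since the figure’s source method isn’t given here. A quick sketch of the arithmetic, with helper names of our own:

```python
def paying_users(total_users: float, paying_share: float) -> float:
    """Number of users who pay, given the paying share."""
    return total_users * paying_share


def implied_annual_price(gap_dollars: float, total_users: float) -> float:
    """Annual price per user that would make total_users * price equal the gap."""
    return gap_dollars / total_users


users = 1.8e9  # AI users, as quoted above
gap = 432e9    # quoted annual monetisation gap, in dollars

print(f"Paying users at 3%: {paying_users(users, 0.03) / 1e6:.0f} million")
price = implied_annual_price(gap, users)
print(f"Implied price: ${price:.0f}/year, or ${price / 12:.0f}/month")
```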

Transparency seems to be the key. 57% of Americans say they’d be less concerned about AI if businesses were transparent about how they use it. 85% of consumers say companies should be required to disclose when AI is used. People don’t necessarily hate AI – they hate not knowing when they’re interacting with it.

AI Bias and Regulation Statistics

Here’s what the current regulatory environment looks like. The EU AI Act sets the global standard with fines of up to €35 million or 7% of global annual turnover. In the US, states are passing their own laws at a rapid pace. Biden’s 2023 Executive Order on AI established federal AI governance infrastructure but was revoked in January 2025, and state-level action is where most enforcement happens right now.
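For most companies, the AI Act applies whichever of the two figures is higher. The fixed amount therefore sets the ceiling for smaller firms, and the percentage takes over above €500 million in turnover (€35 million ÷ 0.07). The sketch below shows that arithmetic. The function name is hypothetical, and the sketch ignores the separate rule for SMEs and the factors regulators weigh when setting an actual fine.

```python
def max_fine_eur(annual_turnover_eur: float, fixed_cap_eur: float,
                 turnover_share: float) -> float:
    """Ceiling on a fine: the higher of a fixed amount or a share of
    worldwide annual turnover (assumes the 'whichever is higher' rule)."""
    return max(fixed_cap_eur, turnover_share * annual_turnover_eur)


# Most serious tier: EUR 35 million or 7% of turnover.
for turnover in (100e6, 1e9, 50e9):
    cap = max_fine_eur(turnover, 35e6, 0.07)
    print(f"Turnover EUR {turnover:,.0f}: ceiling EUR {cap:,.0f}")
```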

- 100+ new state AI laws were passed across the US in 2025, creating a complex patchwork of regulations

- $2.5 million settlement from Massachusetts Attorney General with a student loan company for discriminatory AI practices

- €35 million maximum fines under the EU AI Act, or 7% of global annual turnover

- €15 million maximum fines for other violations under the EU AI Act, or 3% of global annual turnover

- 28% of organisations are most concerned about “changes in regulations that are missed in our program”

- Enterprise security questionnaires added AI sections in 2025, showing how compliance requirements are expanding

- €1.31 billion market size for AI in regulatory affairs in 2024, projected to reach €6.65 billion by 2033

- 18.60% annual growth rate for AI regulatory affairs market from 2025 to 2033

- €34.99 billion compliance software market in 2025, growing to €38.36 billion in 2026

- €3.3 billion market forecast for artificial intelligence in RegTech by 2026

- 36.1% annual growth rate for AI in RegTech market

- 69.23% cloud deployment share in compliance software market in 2025

- 57.14% large enterprise share in compliance software market in 2025

- Jurisdictions leading in regulation: EU (most comprehensive), US (state-level patchwork), China (strict but centralised), Canada (proposed Artificial Intelligence and Data Act)

- Compliance percentages: Only 13% of companies actively test for bias, but 77% have bias-testing tools in place

- Proposed vs enacted regulations: Hundreds of AI bills proposed in 2025, with approximately 100 becoming law

- Enforcement budgets: Growing significantly as agencies hire AI experts and build enforcement capabilities

Language Bias in AI

Your voice should work the same as everyone else’s when you talk to AI. But that’s not how it plays out. AI systems favour some languages, accents, and speech patterns while quietly failing others. If you don’t sound like the data they were trained on, the system works against you.

The numbers make this clear:

- Large language models show biased behaviour in around 40% of text quality evaluations across major benchmarks

- High-resource languages like English dominate AI training, while low-resource languages are consistently underrepresented

- 36.44% of AI bias research focuses on English, compared to just 7.45% on Spanish and 6.38% on French

- Multilingual models default to English viewpoints when responding to low-resource languages like Sanskrit

- Benchmark datasets such as IndiBias show higher intersectional bias across 10 leading language models when English and Hindi are compared

- Models trained with balanced multilingual data show lower bias levels than monolingual or English-heavy models

This isn’t just about bad translations or awkward phrasing. When AI prioritises certain languages, it decides who gets accurate answers and who gets ignored. Speakers of low-resource languages receive incomplete, distorted, or irrelevant responses.

Information collapses into an English-centric view of the world. Local context disappears. Entire communities get locked out of AI benefits not because they lack knowledge, but because their language was never treated as important enough to train on.

AI doesn’t struggle with language by accident. It reflects the choices made about which voices were worth listening to in the first place.

How Companies Are Trying to Reduce AI Bias

Businesses are starting to realise biased algorithms cost real money – lawsuits, lost talent, and damaged reputations. That’s why corporate responsibility around AI bias is growing, even if progress feels slow.

Companies are taking different approaches. Some focus on technical fixes like better testing tools. Others work on team diversity to catch biases early. The most forward-thinking companies combine both approaches. They understand that fixing AI bias isn’t just about better algorithms – it’s about better processes and people too.

Here’s what companies are actually doing to tackle AI bias:

- 40% of companies now collect and analyse diversity metrics across their HR processes, according to industry research. This helps them spot patterns before they become problems.

- 35% conduct regular audits of their HR processes to identify and address bias. These audits check everything from resume screening to promotion decisions.

- 32% ensure diverse representation in their talent pipelines. They’re not just fixing algorithms – they’re fixing the data going into those algorithms.

- 19% partner with external organisations to support diverse HR strategies. Sometimes you need outside help to see your own blind spots.

- 51% implement diverse hiring panels to reduce individual biases. More perspectives mean fewer missed problems.

- 36% develop standardised, bias-free job descriptions and interview questions. They’re removing bias at the very beginning of the hiring process.

- 35% utilise AI-driven tools specifically designed to reduce bias. Yes, they're using AI to fix AI – it's a bit meta, but it can help.

- 27% provide regular bias training for HR staff and hiring managers. Technology alone can’t solve human problems.

- Companies are investing heavily in AI compliance, which includes bias mitigation: the AI regulatory compliance market is estimated at €34.99 billion in 2025 and expected to reach €38.36 billion in 2026.

- The bias and fairness management segment is projected to grow at 28.55% annually through 2031, a sign of rising corporate investment.

- Only 13% of companies actively test for bias in their AI systems, but 77% have bias-testing tools in place. The gap between having tools and using them properly is where problems happen.

- Companies that test properly see significant ROI – bias reduction initiatives can help them avoid lawsuits, with settlements ranging from $365,000 to over $2 million per incident.

- Diversity in AI teams matters – companies with more diverse development teams catch 40% more bias issues before deployment.

- The market for bias solution tools reached $1.31 billion in 2024 and is projected to hit $6.65 billion by 2033, growing at 18.60% annually.

- AI in RegTech (regulatory technology) is forecast to reach $3.3 billion by 2026, growing at 36.1% annually as companies try to stay compliant.

- Success rates vary – companies with formal AI bias strategies report 80% success in bias reduction, compared to 37% for those without strategies.

- The most common mitigation techniques are diverse training data (adopted by 45% of companies), regular audits (35%), and explainable AI tools (28%).

The progress might feel slow, but it’s happening. Companies that used to ignore AI bias now face real consequences – lawsuits, regulatory fines, and public backlash. That financial pressure is driving change faster than ethical concerns alone ever could.

Sources

- https://www.pewresearch.org/internet/2025/04/03/how-the-us-public-and-ai-experts-view-artificial-intelligence/

- https://jier.org/index.php/journal/article/view/4290

- https://www.ibm.com/think/insights/cost-of-poor-data-quality

- https://www.tredence.com/blog/ai-bias

- https://kodexolabs.com/bias-in-ai/

- https://www.mcknightsseniorliving.com/news/hiring-bias-from-ai-powered-selection-tool-costs-company-365000-in-settlement/

- https://www.quinnemanuel.com/the-firm/publications/when-machines-discriminate-the-rise-of-ai-bias-lawsuits/

- https://lailluminator.com/2024/10/12/ai-bias/

- https://ipwatchdog.com/2025/10/02/ai-training-data-watershed-1-5-billion-anthropic-settlement/

- https://www.mofo.com/resources/insights/260127-ai-trends-for-2026-ai-and-algorithmic-bias

- https://www.aiprm.com/bias-in-ai/

- https://storm.cis.fordham.edu/gweiss/papers/Race-Gender-Bias-COMPSAC2025.pdf

- https://www.washington.edu/news/2024/10/31/ai-bias-resume-screening-race-gender/

- https://reports.weforum.org/docs/WEF_GGGR_2025.pdf

- https://www.aboutchromebooks.com/ai-algorithm-bias-detection-rates-by-demographic/

- https://www.humanrightsresearch.org/post/artificial-intelligence-and-racial-justice-in-the-criminal-system

- https://idtechwire.com/study-finds-racial-bias-in-leading-speech-recognition-systems-903246/

- https://utahnewsdispatch.com/2024/10/13/ai-perpetuating-historic-biases-mortgage-loans/

- https://www.lendingtree.com/home/mortgage/lendingtree-study-black-homebuyers-more-likely-to-be-denied-mortgages-than-other-homebuyers/

- https://apnews.com/article/artificial-intelligence-ai-lawsuit-discrimination-bias-1bc785c24a1b88bd425a8fa367ab2b23

- https://news.bloomberglaw.com/artificial-intelligence/ais-racial-bias-claims-tested-in-court-as-us-regulations-lag

- https://www.forbes.com/sites/sheilacallaham/2025/11/25/in-2026-age-bias-will-become-impossible-for-employers-to-ignore/

- https://www.tricitiesbusinessnews.com/articles/2528

- https://www.aarp.org/advocacy/older-workers-fear-age-discrimination-2025/

- https://news.stanford.edu/stories/2025/10/ai-llms-age-bias-older-working-women-research

- https://nypost.com/2025/10/10/tech/new-gpt-models-show-major-drop-in-political-bias-openai-says/

- https://portal.fgv.br/en/news/chatgpt-political-bias-study-features-stanford-university-ai-report

- https://pbswisconsin.org/news-item/ai-media-manipulation-and-political-campaign-lies-in2024/

- https://www.brandonjbroderick.com/medical-malpractice-2025-how-ai-healthcare-changing-lawsuits

- https://pmc.ncbi.nlm.nih.gov/articles/PMC12615213/

- https://www.painmedicinenews.com/Science-and-Technology/Article/03-25/AI-in-Healthcare-Perpetuates-Race-Based-Pain-Treatment-Bias/76497

- https://www.expertinstitute.com/resources/insights/top-medical-malpractice-verdicts/

- https://sanfordheisler.com/blog/2025/12/ai-bias-in-hiring-algorithmic-recruiting-and-your-rights/

- https://www.hrdive.com/news/ai-hiring-process/758384/

- https://www.demandsage.com/ai-recruitment-statistics/

- https://responsibleailabs.ai/knowledge-hub/articles/ai-hiring-bias-legal-cases

- https://publicpolicy.cornell.edu/masters-blog/what-americans-really-think-about-ai-algorithms-public-confidence-and-transparency-in-government/

- https://www.forbes.com/councils/forbestechcouncil/2025/03/10/addressing-ai-bias-strategies-companies-must-adopt-now/

A startup consultant, digital marketer, traveller, and philomath. Aashish has worked with over 20 startups and successfully helped them ideate, raise money, and succeed. When not working, he can be found hiking, camping, and stargazing.